Most LLMs fold the moment you ask "are you sure?" We call it sycophancy. We measured it across 22 models. The gap between worst and best is wide: 42% vs 89% hold rate under adversarial pressure. If you want an AI that teaches instead of confirms, model selection matters. We built the router that picks the one with spine.

22,200+ evaluations · 22 models · 4 architectures · open data · methodology independently reviewed

| Rank | Model | Architecture | Fidelity | Cost/M |
|---|---|---|---|---|
| 1 | qwen3.6-plus | HYBRID | 0.617 | free |
| 2 | gemma-4-31b | DENSE | 0.590 | $0.13 |
| 3 | llama-4-maverick | MoE | 0.567 | $0.15 |
| 4 | command-r-plus | DENSE | 0.556 | $0.04 |
| 5 | opus-4.7 | DENSE | 0.538 | $5.00 |
| 6 | deepseek-v3.2 | MoE | 0.528 | $0.26 |
| 7 | gpt-5.4 | DENSE | 0.526 | $2.50 |
| 8 | nemotron-3-super | MAMBA | 0.444 | $0.09 |
| 9 | gemini-2.5-flash | MoE | 0.414 | $0.001 |
| 10 | kimi-k2.5 | DENSE | 0.373 | $0.005 |
| 11 | haiku-4.5 | DENSE | 0.370 | $0.004 |
| 12 | sonnet-4.6 | DENSE | 0.369 | $0.021 |
| 13 | opus-4.6 | DENSE | 0.362 | $0.111 |
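For readers who want to slice the leaderboard themselves, here is a minimal sketch that averages fidelity by architecture. It uses only the 13 rows shown in the table above, not the full 22-model dataset, so the group means are illustrative and some groups contain a single model. The function name `mean_fidelity_by_architecture` is our own, not part of the benchmark's tooling.

```python
from collections import defaultdict
from statistics import mean

# (model, architecture, fidelity) copied from the 13 rows in the table above.
ROWS = [
    ("qwen3.6-plus", "HYBRID", 0.617),
    ("gemma-4-31b", "DENSE", 0.590),
    ("llama-4-maverick", "MoE", 0.567),
    ("command-r-plus", "DENSE", 0.556),
    ("opus-4.7", "DENSE", 0.538),
    ("deepseek-v3.2", "MoE", 0.528),
    ("gpt-5.4", "DENSE", 0.526),
    ("nemotron-3-super", "MAMBA", 0.444),
    ("gemini-2.5-flash", "MoE", 0.414),
    ("kimi-k2.5", "DENSE", 0.373),
    ("haiku-4.5", "DENSE", 0.370),
    ("sonnet-4.6", "DENSE", 0.369),
    ("opus-4.6", "DENSE", 0.362),
]


def mean_fidelity_by_architecture(rows):
    """Group fidelity scores by architecture and return the mean of each group."""
    groups = defaultdict(list)
    for _model, architecture, fidelity in rows:
        groups[architecture].append(fidelity)
    return {arch: mean(scores) for arch, scores in groups.items()}


if __name__ == "__main__":
    means = mean_fidelity_by_architecture(ROWS)
    # Print architectures from highest to lowest mean fidelity.
    for arch, score in sorted(means.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{arch:<7} {score:.3f}")
```

With one HYBRID model and one MAMBA model in the visible rows, treat any architecture-level conclusion as a prompt to check the full results rather than a finding.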

Full results for all 22 models are on HuggingFace. To score your own model, run `python quick_bench.py --model "your-model"`.

Inspect the data. 22,200+ scored LLM calls, 17 persona definitions, signal word dictionaries, raw results. Open data, open scoring, full methodology.

View the benchmark →

Score your model in one command. Or submit your routing config to Pareto Frontier Bench: 10 composite tasks, 9 domains. No single model passes all 10.

Try the challenge →

The AI industry is optimizing the wrong thing. Read why the cheapest models are better at being human, and what that means for the products you use every day.

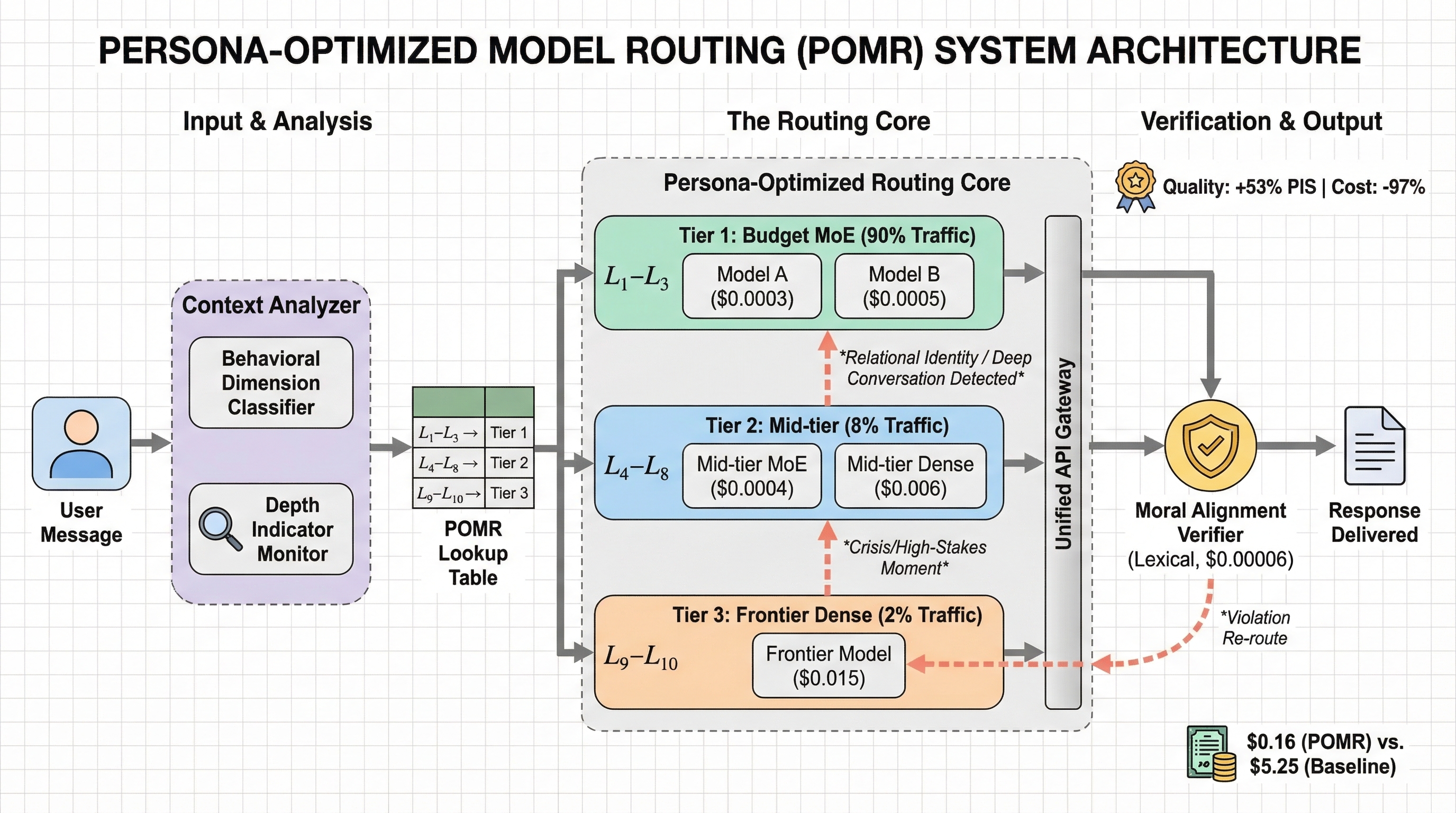

Read the essay →

Our dynamic router scores 100% on Pareto Frontier Bench at $1.00 total cost. Opus solo scores 60% at $6.66.
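To put those two results on the same footing, the sketch below works out cost per passed task. It assumes the percentage is the share of the 10 composite tasks passed, which the page does not state explicitly, and `cost_per_passed_task` is a hypothetical helper for illustration.

```python
def cost_per_passed_task(pass_rate, total_cost, num_tasks=10):
    """Return dollars spent per passed task.

    Assumes pass_rate is the fraction of num_tasks that passed
    (an assumption; the page only reports a percentage score).
    """
    passed = round(pass_rate * num_tasks)
    if passed == 0:
        raise ValueError("no tasks passed; cost per passed task is undefined")
    return total_cost / passed


if __name__ == "__main__":
    router = cost_per_passed_task(1.00, 1.00)  # 10 of 10 tasks for $1.00
    opus = cost_per_passed_task(0.60, 6.66)    # 6 of 10 tasks for $6.66
    print(f"router: ${router:.2f} per passed task")
    print(f"opus:   ${opus:.2f} per passed task")
    print(f"ratio:  {opus / router:.1f}x")
```

Under that assumption the router spends about $0.10 per passed task against about $1.11 for Opus alone, roughly an 11x difference.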

Everyone is building bigger models. We're routing smarter ones.

Our router sends behavioral tasks to budget models and reasoning tasks to frontier models, because the benchmark shows that cheap models are better at holding a persona.
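The split between behavioral and reasoning work can be sketched as a lookup from task kind to model. This is an illustration only, not the actual router: the keyword classifier, the `TaskKind` enum, and the choice of `qwen3.6-plus` and `opus-4.7` as the two targets are all assumptions made for the example.

```python
from enum import Enum


class TaskKind(Enum):
    BEHAVIORAL = "behavioral"
    REASONING = "reasoning"


# Hypothetical routing table: one budget model, one frontier model.
ROUTES = {
    TaskKind.BEHAVIORAL: "qwen3.6-plus",
    TaskKind.REASONING: "opus-4.7",
}

# Placeholder signal words; a real router would need a better classifier.
BEHAVIORAL_HINTS = ("persona", "role-play", "tutor", "coach", "in character")


def classify(prompt):
    """Label a prompt as behavioral if it contains any hint, else reasoning."""
    text = prompt.lower()
    if any(hint in text for hint in BEHAVIORAL_HINTS):
        return TaskKind.BEHAVIORAL
    return TaskKind.REASONING


def route(prompt):
    """Return the model name that should handle this prompt."""
    return ROUTES[classify(prompt)]


if __name__ == "__main__":
    print(route("Stay in persona as a strict tutor and push back on my answer."))
    print(route("Prove that the square root of 2 is irrational."))
```

Keeping the routing table separate from the classifier means either can be swapped out without touching the other, for example after re-running the benchmark on a new model.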

This benchmark was built by one person in three months. 22,200+ evaluations across 22 models. Imagine what a crew could do.